The Sense in Human Nonsense

When we put ourselves into our communications, we breathe life into our work that no machine can emulate

“Writing is thinking. To write well is to think clearly. That’s why it’s so hard.”

— David McCullough, 2002

I’ve seen plenty of people complaining about the explosion of so-called writers on LinkedIn, many of whom are clearly using artificial intelligence to write their posts. Two coded messages that bear on this crossed my desk. One was 133 years old. The other was posted on LinkedIn. Both were warnings.

In the Sherlock Holmes story “The Gloria Scott,” Trevor, an old and respectable country squire, receives a letter from his associate Beddoes so banal it reads like newspaper filler:

“The supply of game for London is going steadily up. Head-keeper Hudson, we believe, has been now told to receive all orders for fly paper and for preservation of your hen-pheasant’s life.”

And yet, after reading it, Trevor suffered such a shock that it killed him.[1]

What did he see that we don’t?

Strawberry Mango Forklift

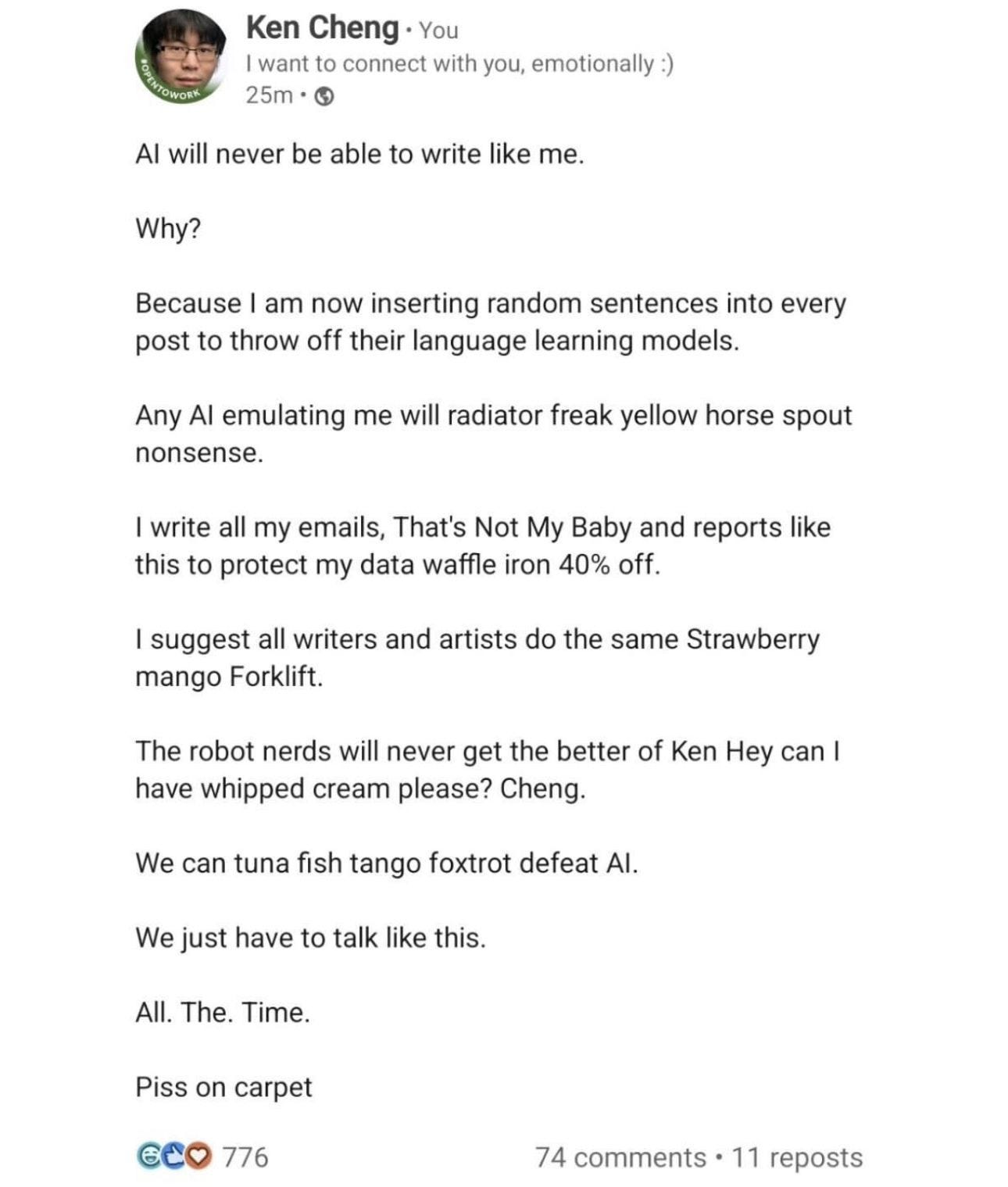

I’ve been thinking about that message ever since I saw a LinkedIn post by Ken Cheng. He has decided to defend his prose from the language models by salting every paragraph with nonsense: “Any AI emulating me will radiator freak yellow horse spout nonsense… We can tuna fish tango foxtrot defeat AI.”

It ends, gloriously, “Piss on carpet.” It is one of the funniest things I’ve read all month. It is also a cipher running in the opposite direction: where Beddoes hid meaning inside banality, Cheng plants nonsense inside meaning, a writer scrambling to prove a human is still in the room.

We laugh. But the joke lands because the threat is real. Bot farms now manufacture political consensus by the tens of thousands; entire belief systems can be conjured from server racks.[2]

All Your Humans Are Belong to Us

In recent weeks, MIT announced that Sloan Management Review, a 67-year-old institution that translated serious research for working leaders, will be shuttered, with critics noting that the closure comes “at precisely the moment when AI-generated content, information overload, and collapsing trust are making that role more valuable than ever.”[3]

This is neither a passing fad nor an insignificant blip to be ignored. Tamsen Webster observed that the commodification of content has come about because “we’ve lost the tools,” the timeless and fundamental skills that allow us to think about, discuss, and expand our ideas together. She reminds us that we do our best work when we’re “engaging with people at the level of ideas and understanding, so that we could expand each other’s points of view and see new options and routes forward as a result.”[4]

Just this week, I witnessed an incident at a client that almost made me cry. Two executives were exchanging emails about business operations, and it was painfully clear that each had his AI system talking to the other’s. The data were plentiful, but the personal interpretation and feeling were absent. I wondered how long the platforms could sustain the back-and-forth, and how superfluous the leaders might be by the time it concluded.

Once and Future Human Beings

In a previous entry, I argued that “with AI, we go from creators to commanders, and our creativity is demonstrated in how cleverly we can create prompts rather than how we string together disparate thoughts and ideas.”[5]

And in another,[6] I quoted Lewis Lapham, who put it more elegantly than I ever will:

“[Machines] process words as objects, not as subjects. Not knowing what the words mean, they don’t hack into the vast cloud of human consciousness — history, art, literature, religion, philosophy, poetry, and myth — that is the making of once and future human beings.”[7]

That is the whole argument, in two sentences.

The Source of Fear

A machine can shuffle “fly paper” and “hen-pheasant” forever and never feel the chill Trevor felt. It can mimic Ken Cheng’s syntax but not his defiance. It can produce a thousand passable management essays and not one that you’d hand to a CFO without translation.

Writing is thinking. And feeling. To outsource the writing is to outsource the thinking. And a civilization that stops thinking does not need an AI overlord to fall — it has already volunteered. Is that what we want?

Now, back to that Sherlock Holmes story. The innocuous message wasn’t nonsense; it was a cipher indicating that Hudson, a third associate, had shared a long-feared secret. Read it again, taking every third word and starting with the first (count each half of a hyphenated word separately):

“The supply of game for London is going steadily up. Head-keeper Hudson, we believe, has been now told to receive all orders for fly paper and for preservation of your hen-pheasant’s life.”
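For anyone who would rather let a machine do the counting, here is a minimal Python sketch of the mechanical step. It assumes, as the count in the story requires, that each half of a hyphenated word (“Head-keeper,” “hen-pheasant’s”) counts as a separate word; the function name `every_nth_word` is my own illustration, not anything from Doyle.

```python
def every_nth_word(message: str, n: int = 3) -> list[str]:
    """Hypothetical helper: return every nth word, starting with the first.

    Assumption: hyphens split words, so "Head-keeper" counts as two.
    """
    # Treat hyphens as word breaks, then split on whitespace
    words = message.replace("-", " ").split()
    # Drop trailing punctuation such as the period in "up." or comma in "Hudson,"
    cleaned = [w.strip(".,;:") for w in words]
    # A slice with step n picks the 1st, 4th, 7th, ... word when n == 3
    return cleaned[::n]


beddoes = (
    "The supply of game for London is going steadily up. "
    "Head-keeper Hudson, we believe, has been now told to receive "
    "all orders for fly paper and for preservation of your "
    "hen-pheasant's life."
)

print(" ".join(every_nth_word(beddoes)))
```

The slice with a step of three does the counting; the reading is still up to you.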

Old Trevor saw it instantly and it deeply affected him. Consider what you might communicate that has such a profound effect on another human being.

That’s not really something you should be outsourcing. After all, you wouldn’t outsource your thinking, would you?

There’s so much to learn,

P.S.

Did you catch the hidden message in Holbein’s painting, The Ambassadors (1533)? It features Jean de Dinteville, French Ambassador to the court of Henry VIII of England, and Georges de Selve, Bishop of Lavaur, standing with a variety of state-of-the-art scientific instruments. The painting is famous for the spectacular anamorphic object at the bottom of the foreground, which, viewed from an oblique angle, resolves into a human skull.

1. Arthur Conan Doyle, “The Adventure of the Gloria Scott,” The Memoirs of Sherlock Holmes (1893).

2. Eric Schwartzman, “Bot Farms Are Manipulating Social Media,” Fast Company (April 24, 2025).

3. Marc Ethier, “Decision To Shut Down 67-Year-Old MIT Sloan Management Review Draws Fierce Backlash,” Poets & Quants (May 8, 2026).

4. Tamsen Webster, “Commercialization’s Corruption of Communication,” The Sensegiver (May 6, 2026). There is so much to unpack in Tamsen’s piece; I couldn’t give it enough space here, but it deserves a full read when you have the time.

5. Scott Monty, “Thinking Ex Machina,” Timeless & Timely (January 5, 2025).

6. Scott Monty, “State of Mind,” Timeless & Timely (July 11, 2025).

7. Lewis H. Lapham, “Enchanted Loom,” Lapham’s Quarterly (Winter 2018).